Modeling narrative and price in the AI ecosystem

It is not enough to know which narratives exist. What matters is how they are changing, whether price is validating them, and what kind of situation that creates for an analyst.

There is no shortage of information. On any given day, the AI ecosystem produces earnings commentary, partnership announcements, product launches, policy headlines, social-media conviction, valuation notes, and sharp price moves across a few dozen tightly linked names. The challenge is not finding more material. The challenge is understanding what is actually happening.

The same company can sit inside several overlapping stories at once. Some narratives are strengthening, others are fading, and others are being repeated long after the market has absorbed them. Price can confirm a narrative, ignore it, or run ahead of it entirely. A good thesis can be early, validated, crowded, or breaking — and those are very different situations for an analyst.

topicspace is an experiment in seeing those differences more clearly. The working hypothesis is that narrative change and price behavior contain useful information when read together, and that this is easier to see when the ecosystem is modeled as a field rather than a feed.

The problem: why AI narrative analysis is hard

Following the AI ecosystem as a financial analyst is less about keeping up with headlines and more about interpreting what is happening. A headline can be right and still arrive too late to be useful. A narrative can be right and already crowded. Reading more does not resolve either of those problems — in a domain this fast-moving, it can make them harder to see.

The questions that actually matter are situational:

Analysts do not struggle most with access to information. They struggle with timing, prioritization, and interpretation — and those are state problems, not retrieval problems.

By field, I mean the AI ecosystem treated as an interacting system — actors, active narratives, validation gaps, and price behavior considered together and in relation to one another. Not a list of companies. Not a stream of documents. A field view is an attempt to understand how those things are moving together over time.

The experiment: modeling the AI ecosystem as a field

The idea behind topicspace is simple: if AI is a fast-moving, multi-actor domain, then it should be modeled as one.

Most market tools give analysts one of two views. A document stream shows what was published. A price board shows what moved. Both are useful. Neither is enough on its own. A document stream does not tell you whether the story matters. A price board does not tell you what story the market is validating, ignoring, or already front-running.

topicspace is an attempt to work in the layer between those two: not just what was said, and not just what moved, but how the stories, actors, and price responses are currently interacting.

A field view tries to answer a different question. Instead of treating Nvidia, Microsoft, power demand, and AI chips as separate items in a feed, it asks whether they are part of the same active structure, whether price is confirming that structure, and whether the setup is early, crowded, or beginning to weaken.
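One simple way to ask "are these part of the same active structure?" is to treat actors and themes as nodes in a graph and look for connected groups. The sketch below assumes a hypothetical input, `links`, that maps each actor to the narratives it is currently tied to. It does not describe how topicspace builds its field. It only shows how a co-narrative grouping could be computed once those links exist.

```python
def active_structures(links: dict[str, set[str]]) -> list[set[str]]:
    """Group actors and themes into connected 'active structures'.

    `links` is a hypothetical input mapping each actor to the themes it is
    currently linked to. Actors and themes become nodes; each link is an
    undirected edge. Connected components are the candidate structures.
    """
    graph: dict[str, set[str]] = {}
    for actor, themes in links.items():
        graph.setdefault(actor, set())  # keep actors with no themes as nodes
        for theme in themes:
            graph[actor].add(theme)
            graph.setdefault(theme, set()).add(actor)

    seen: set[str] = set()
    components: list[set[str]] = []
    for start in graph:
        if start in seen:
            continue
        # Iterative depth-first walk collects everything reachable from `start`.
        component = {start}
        stack = [start]
        seen.add(start)
        while stack:
            node = stack.pop()
            for neighbor in graph[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    component.add(neighbor)
                    stack.append(neighbor)
        components.append(component)
    return components


# Illustrative links only; "ExampleCo" and the theme names are hypothetical.
links = {
    "Nvidia": {"ai_chips", "datacenter_capex"},
    "Microsoft": {"datacenter_capex", "power_demand"},
    "ExampleCo": {"payments_ai"},
}
structures = active_structures(links)
```

Here Nvidia, Microsoft, AI chips, and power demand land in one structure because they share a theme, while the unrelated actor stays separate. A real field view would also weight edges by how active each narrative is; this sketch ignores strength entirely.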

In practice, the experiment focused on three things:

The key question is not “what happened?” It is “what state is this part of the market in right now?”

One lesson from the experiment: adding more information was not enough on its own. The analysis became more useful when different jobs were kept separate — historical context, current interpretation, recent change, evidence, and forward conditions. When those layers are blended together, the result tends to be repetitive and harder to evaluate. When they are kept distinct, the system becomes easier to read and the reasoning becomes more precise.
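Keeping those jobs separate can be enforced structurally rather than by convention. The sketch below is a hypothetical record type, not topicspace's schema: each interpretive layer gets its own field, and rendering emits them as distinct, ordered sections so one layer cannot quietly absorb another.

```python
from dataclasses import dataclass, field


@dataclass
class ActorView:
    """Hypothetical per-actor record with one field per interpretive layer."""
    actor: str
    historical_context: str
    current_interpretation: str
    recent_change: str
    evidence: list[str] = field(default_factory=list)
    forward_conditions: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Each layer is emitted under its own heading, in a fixed order,
        # so the reader can evaluate each one independently.
        sections = [
            ("Historical context", self.historical_context),
            ("Current interpretation", self.current_interpretation),
            ("Recent change", self.recent_change),
            ("Evidence", "\n".join(f"- {e}" for e in self.evidence) or "(none)"),
            ("Forward conditions",
             "\n".join(f"- {c}" for c in self.forward_conditions) or "(none)"),
        ]
        return "\n\n".join(f"{title}:\n{body}" for title, body in sections)
```

The design choice is that empty layers render as "(none)" instead of disappearing, which makes a missing layer visible rather than silently blending the remaining ones together.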

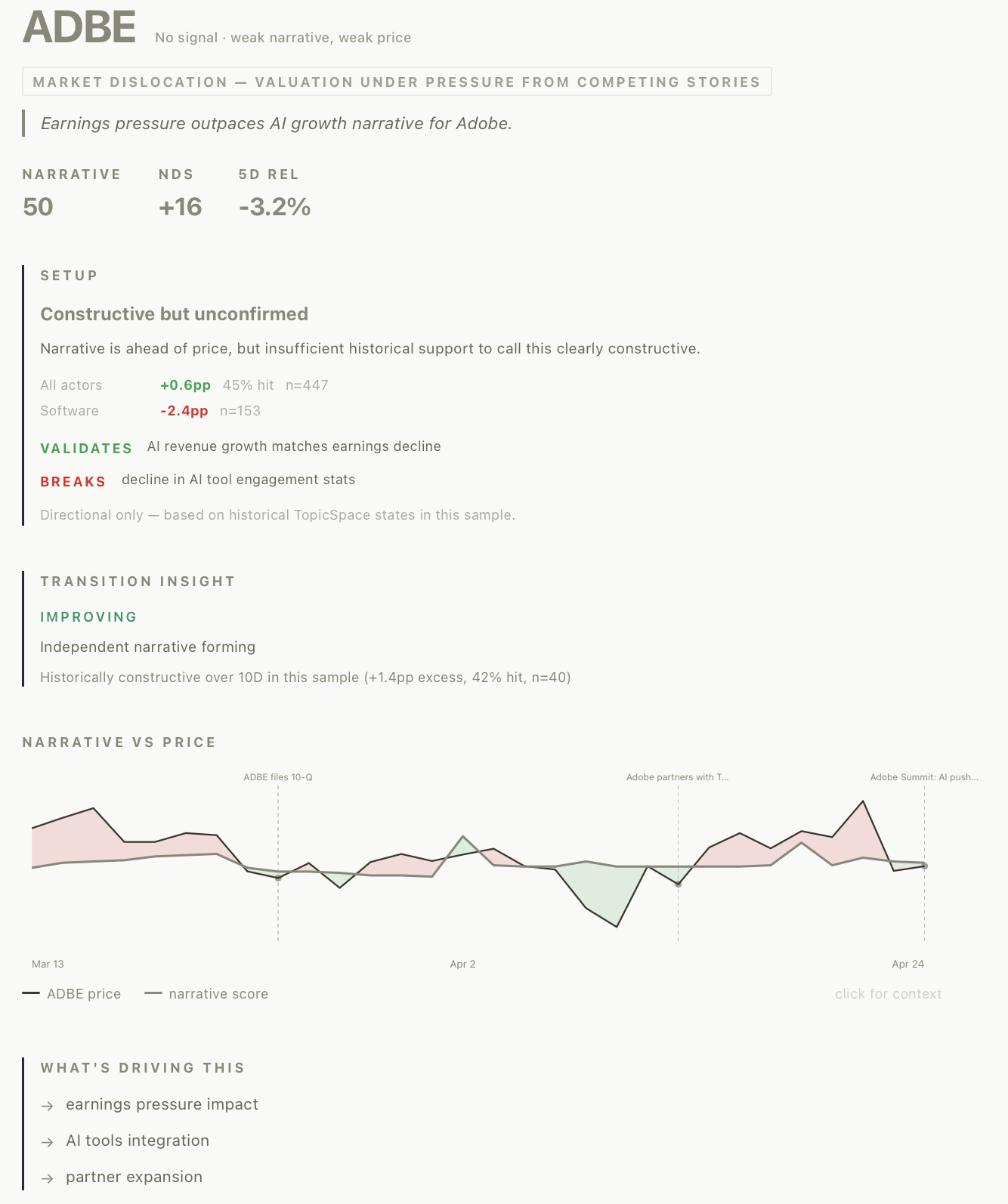

What “field state” means: narrative-price interaction

The working idea in topicspace is that narrative and price interact in recognizable states. An analyst does not just need to know whether a story exists. The more useful question is whether price is confirming it, lagging it, outrunning it, or rejecting it. Those are meaningfully different conditions, even if the underlying thesis sounds similar.

In this experiment, that relationship is represented through a small set of canonical narrative-price states.

These states are not a universal taxonomy. They are a practical language for distinguishing situations analysts already handle intuitively but often describe loosely. The state map below shows how states relate to each other and which transitions have been most meaningful in the research sample.
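To make the four relationships concrete (price confirming, lagging, outrunning, or rejecting a narrative), here is a minimal classifier sketch. It assumes two hypothetical inputs that the article does not define: a standardized change in narrative strength and a standardized price return over the same window. The threshold of 1.0 and the state labels are illustrative, not the canonical states used in the experiment.

```python
from enum import Enum


class State(Enum):
    CONFIRMING = "price confirming narrative"
    LAGGING = "price lagging narrative (narrative-leading)"
    OUTRUNNING = "price outrunning narrative (price-led)"
    REJECTING = "price rejecting narrative"
    QUIET = "no clear signal"


def classify(narrative_z: float, price_z: float, threshold: float = 1.0) -> State:
    """Map standardized narrative change and price change to a state.

    narrative_z: hypothetical z-score of the change in narrative strength;
                 the sign gives the narrative's direction.
    price_z:     z-score of the price return over the same window.
    threshold:   assumed cutoff for a "strong" move on either axis.
    """
    strong_narrative = abs(narrative_z) >= threshold
    strong_price = abs(price_z) >= threshold
    if not strong_narrative and not strong_price:
        return State.QUIET
    if strong_narrative and strong_price:
        same_direction = (narrative_z > 0) == (price_z > 0)
        return State.CONFIRMING if same_direction else State.REJECTING
    if strong_narrative:
        return State.LAGGING  # story moving, price not yet paying it
    return State.OUTRUNNING   # price moving without matching narrative support
```

For example, a strong narrative shift with a flat price, `classify(1.8, 0.2)`, reads as narrative-leading, while `classify(0.1, 2.5)` reads as price running ahead of the story.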

What we found: recurring patterns and transitions

The goal was not only to define states, but to see whether different states and transitions actually behaved differently over time. A few practical patterns emerged.

Narrative-leading states were stronger than price-led states

The clearest pattern in this sample was that setups where the narrative was ahead of price tended to behave better than setups where price had already outrun the story. That is useful because it creates a practical distinction between an idea that may still be early and one that may already be stretched. A thesis can still be right when price has moved too far, too fast. The state model helps separate those situations.

Crowded repricings were weaker

One of the most consistent caution conditions was price moving well ahead of narrative support. Not every price-led move failed, but in aggregate these setups were weaker and more fragile than narrative-leading ones. In practical terms, this is the “the story may still be true, but the setup is no longer clean” problem.
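"Weaker in aggregate" implies a comparison of outcomes grouped by state. A minimal sketch of that comparison follows, assuming each observation is a hypothetical `(state_label, forward_return)` pair where the forward-return horizon is chosen by the analyst. It shows the shape of the calculation only and makes no claim about the experiment's actual evaluation method or numbers.

```python
from statistics import mean
from typing import Dict, Iterable, Tuple


def summarize_by_state(
    observations: Iterable[Tuple[str, float]],
) -> Dict[str, Dict[str, float]]:
    """Group (state_label, forward_return) pairs and summarize each state.

    Returns, per state: observation count, mean forward return, and hit rate
    (share of observations with a positive forward return).
    """
    grouped: Dict[str, list] = {}
    for state, forward_return in observations:
        grouped.setdefault(state, []).append(forward_return)
    return {
        state: {
            "n": len(returns),
            "mean": mean(returns),
            "hit_rate": sum(r > 0 for r in returns) / len(returns),
        }
        for state, returns in grouped.items()
    }
```

Comparing `mean` and `hit_rate` for narrative-leading versus price-led labels is the simplest way to express the contrast described above; a fuller study would also control for sector and time period.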

Early confirmation was freshness-sensitive

Early confirmation turned out to be most useful close to the point where a name first entered that state. When the label lingered without follow-through, its value declined sharply. That matters because it shows that state labels are not enough on their own: timing inside the state matters too. A fresh shift into confirmation is different from a name that has been sitting in that condition for a week without further validation.
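One way to encode freshness is to discount a label by how long it has gone without new validation. The sketch below uses exponential decay with an assumed two-day half-life; both the decay form and the parameter are illustrative choices, not values from the experiment.

```python
def freshness_weight(days_since_validation: float, half_life_days: float = 2.0) -> float:
    """Discount an early-confirmation label by time without follow-through.

    days_since_validation: days since the label was last reinforced by new
        validation (the clock resets whenever validation arrives).
    half_life_days: assumed half-life; after this many days without
        validation, the label carries half its original weight.
    """
    if days_since_validation < 0:
        raise ValueError("days_since_validation must be non-negative")
    return 0.5 ** (days_since_validation / half_life_days)
```

Under these assumptions a fresh label has weight 1.0, and a label that has sat for a week without validation falls below 0.1, which mirrors the "fresh shift versus stale label" distinction in the text.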

Rejected downside split by sector

One of the strongest findings was that the same state did not mean the same thing everywhere. Price rejecting a negative narrative was constructive in semis and other non-fragile parts of the market. In software, fintech, and other fragile groups, the same pattern was much weaker. Sector context, in other words, is part of what a state means, and this was one of the most important practical lessons from the experiment.
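Sector-dependent meaning can be represented as a lookup keyed on both the state and a sector group, so the same state label can resolve to different readings. In the sketch below, the fragile set, the `"rejected_downside"` label, and the reading strings are hypothetical; only the software/fintech versus semis contrast comes from the text.

```python
from typing import Dict, Tuple

# Hypothetical grouping based on the examples in the text.
FRAGILE_SECTORS = frozenset({"software", "fintech"})


def sector_group(sector: str) -> str:
    """Collapse a sector name into the coarse group used for interpretation."""
    return "fragile" if sector.strip().lower() in FRAGILE_SECTORS else "non_fragile"


# The same state maps to different readings depending on sector group.
READING: Dict[Tuple[str, str], str] = {
    ("rejected_downside", "non_fragile"): "constructive",
    ("rejected_downside", "fragile"): "weak",
}


def read_state(state: str, sector: str) -> str:
    """Return a sector-conditioned reading, or 'unadjusted' if none is defined."""
    return READING.get((state, sector_group(sector)), "unadjusted")
```

For instance, `read_state("rejected_downside", "semis")` resolves to `"constructive"`, while the same state in `"Software"` resolves to `"weak"`.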

Transitions often mattered more than static state presence

Some of the most useful signals came not from being in a state, but from moving into one. Narrative strengthening ahead of price, follow-through becoming confirmation, or urgency fading all added useful context that static labels alone missed. The ecosystem is not just a set of conditions — it changes through movement between them.
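Treating transitions as signals means working from a sequence of states rather than a single snapshot. The sketch below detects every change in a daily state series and then keeps only a watch list of transitions. The state labels and the watch list are hypothetical stand-ins for the examples in the text (narrative strengthening ahead of price, follow-through becoming confirmation, urgency fading).

```python
from typing import List, Tuple

Transition = Tuple[int, str, str]  # (index of new state, from_state, to_state)

# Hypothetical transitions worth flagging; labels are illustrative only.
WATCHED = {
    ("quiet", "lagging"),              # narrative strengthening ahead of price
    ("follow_through", "confirming"),  # follow-through becoming confirmation
    ("urgent", "fading"),              # urgency fading
}


def find_transitions(states: List[str]) -> List[Transition]:
    """Return every point where the state differs from the previous step."""
    return [
        (i, states[i - 1], states[i])
        for i in range(1, len(states))
        if states[i] != states[i - 1]
    ]


def flagged_transitions(states: List[str]) -> List[Transition]:
    """Keep only transitions on the watch list."""
    return [t for t in find_transitions(states) if (t[1], t[2]) in WATCHED]
```

A name that sits in one state for days produces no transitions at all, which is the point: the signal comes from the move between states, not from the label itself.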

Why analysts should care

The value of this experiment is not that it replaces fundamental research. It is that it helps organize noisy, fast-moving information into states that are easier to interpret: which stories are building, which are being paid, which are already crowded, and which are beginning to break.

That matters because the same thesis can look very different depending on whether it is early, confirmed, ignored, or already stretched.

Separate thesis quality from setup quality

A thesis can be right and still arrive in a poor setup. A strong business argument is not the same thing as a strong current state. This is one of the most common mistakes in fast-moving sectors: being right on the story but late on the setup.

Distinguish loud narratives from validated ones

Some stories attract enormous attention without price confirmation. Others begin to be paid before the narrative is fully formed. That distinction matters because attention alone is not the same thing as validation.

Identify when price has already front-run the story

A narrative can still be true while the setup is already crowded. The model is useful here because it helps distinguish a strengthening story from a story that price has already run ahead of. That is often the difference between “interesting” and “actionable.”

Define what would validate or break the setup

Once the system has a current state, a setup type, and a recent transition, it becomes easier to ask the right next question: what would confirm this, what would undermine it, and what should be watched next? That does not replace analyst judgment. It makes the judgment task clearer.
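The "what would confirm, what would undermine, what to watch" step can be sketched as a mapping from the current state to a pair of checks, plus the most recent transition as the item to watch. The `Setup` type, state labels, and check wording below are hypothetical and paraphrase the patterns described earlier; they are prompts for the analyst, not automated decisions.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Setup:
    state: str                       # hypothetical label, e.g. "lagging"
    setup_type: str                  # free-form description of the setup
    recent_transition: Optional[str] = None


# Illustrative checks paraphrasing the patterns described in the text.
CHECKS: Dict[str, Dict[str, str]] = {
    "lagging": {
        "confirm": "price starts to follow the strengthening narrative",
        "undermine": "narrative support fades before price responds",
    },
    "outrunning": {
        "confirm": "narrative support catches up with the price move",
        "undermine": "price reverses without new narrative support",
    },
    "confirming": {
        "confirm": "further validation arrives while the label is still fresh",
        "undermine": "the label lingers without follow-through",
    },
}

UNDEFINED = {"confirm": "undefined for this state", "undermine": "undefined for this state"}


def next_questions(setup: Setup) -> Dict[str, str]:
    """Return confirm/undermine checks and the transition to watch."""
    checks = CHECKS.get(setup.state, UNDEFINED)
    watch = setup.recent_transition or "no recent transition"
    return {**checks, "watch": watch}
```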

The actor page combines historical benchmark context, current setup type, recent transition signal, and evidence as separate interpretive layers — each answering a different question.

How topicspace routes signal to analyst action

The system has three intervention points between raw data and analyst-facing output. Each is a place where field state shapes what the analyst sees and in what form.

Where topicspace fits

The market for financial research tools is crowded, but the categories are not all solving the same problem.

Some tools help analysts find and summarize evidence quickly across transcripts, filings, and news. Others turn unstructured text into signals such as sentiment, novelty, or event alerts. Others help monitor companies, sectors, and strategic developments more broadly.

Those are all useful jobs. They are just not the same job as the one topicspace is trying to do.

topicspace is less focused on retrieving more source material and more focused on representing the current narrative-price state of the field. The aim is not only to show what was said or what moved, but to help an analyst understand what kind of situation is in front of them right now.

That means asking questions like:

In that sense, topicspace sits between text intelligence and analyst interpretation — narrower and more interpretive than a research terminal, more situational than a news or sentiment feed.

What this does not do

It is worth being direct about what this is not.

This kind of model is only useful if it helps an analyst think more clearly — not if it pretends to make judgment unnecessary.

From headlines to field state

Financial analysts do not just need more information. They need better ways to understand what state the field is in.

In this research sample, a few patterns held up consistently: narrative-leading setups were stronger than price-led ones; transitions between states often carried more signal than static state presence; and the same state could mean different things depending on sector context. None of that replaces fundamental analysis. What it adds is a cleaner read on what kind of situation is in front of you.

It is not enough to know which narratives exist. What matters is how they are changing, whether they are being validated, and what state the field is in.